Sentic Computing

With the recent development of deep learning, research in artificial intelligence (AI) has gained new vigor and prominence. Machine learning, however, suffers from three big issues, namely:

1. Dependency: it requires (a lot of) training data and is domain-dependent;

2. Consistency: different training or tweaking leads to different results;

3. Transparency: the reasoning process is uninterpretable (black-box algorithms).

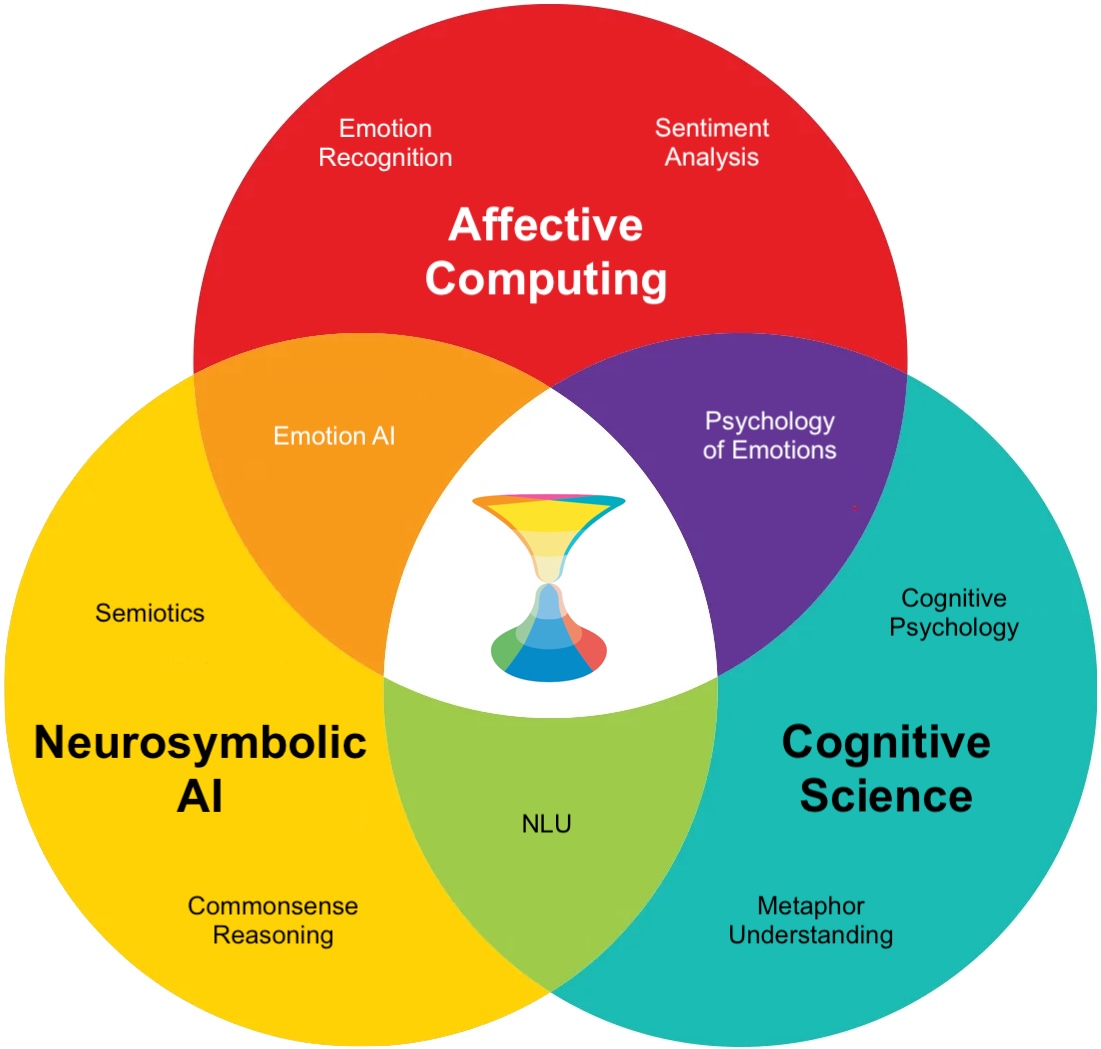

At SenticNet, we address such issues in the context of natural language processing (NLP) through a multidisciplinary approach, termed sentic computing, that aims to bridge the gap between statistical NLP and the many other disciplines that are necessary for understanding human language, such as linguistics, commonsense reasoning, semiotics, and affective computing. Sentic computing, whose name derives from the Latin sensus (as in commonsense) and sentire (root of words such as sentiment and sentience), enables the analysis of text not only at document, page, or paragraph level, but also at sentence, clause, and concept level. This is enabled by an approach to NLP that is both top-down and bottom-up: top-down because sentic computing leverages symbolic models (such as semantic networks and conceptual dependency theory) to encode meaning in an explainable way; bottom-up because we use sub-symbolic paradigms (such as multitask learning and prompt-based learning) to infer syntactic patterns from data.

Coupling symbolic and sub-symbolic AI is key to moving forward on the path from NLP to natural language understanding. Relying solely on machine learning is only useful for making a 'good guess' based on past experience, because sub-symbolic methods only encode correlation and their decision-making process is merely probabilistic. Natural language understanding, however, requires much more than that. To use Noam Chomsky's words, "you do not get discoveries in the sciences by taking huge amounts of data, throwing them into a computer and doing statistical analysis of them: that’s not the way you understand things, you have to have theoretical insights".

Over the last decade, sentic computing has positioned itself as a horizontal technology that serves as a back-end to several applications in many different areas, including: e-business (e.g., to help businesses understand their target audience’s preferences and tailor their offerings and marketing strategies accordingly), e-commerce (e.g., to identify popular items and trends, update product listings, adjust prices, and address customer concerns), e-tourism (e.g., to enable travel companies to gauge travelers' satisfaction and identify areas for improvement), e-mobility (e.g., to identify potential customers and address concerns related to electric vehicle adoption, such as range anxiety, charging infrastructure, and cost), e-governance (e.g., to develop targeted communication strategies, enhance public services, and address pressing issues), e-security (e.g., to help organizations monitor and detect potential security threats and vulnerabilities), e-learning (e.g., to create personalized learning experiences by understanding learners’ preferences, learning styles, and interests), and e-health (e.g., to monitor public opinion on various health issues, such as vaccination and mental health, develop targeted communication campaigns, and raise awareness).

State-of-the-art performance in all these sentiment analysis applications is ensured by sentic computing's new approach to NLP, whose novelty gravitates around three key shifts:

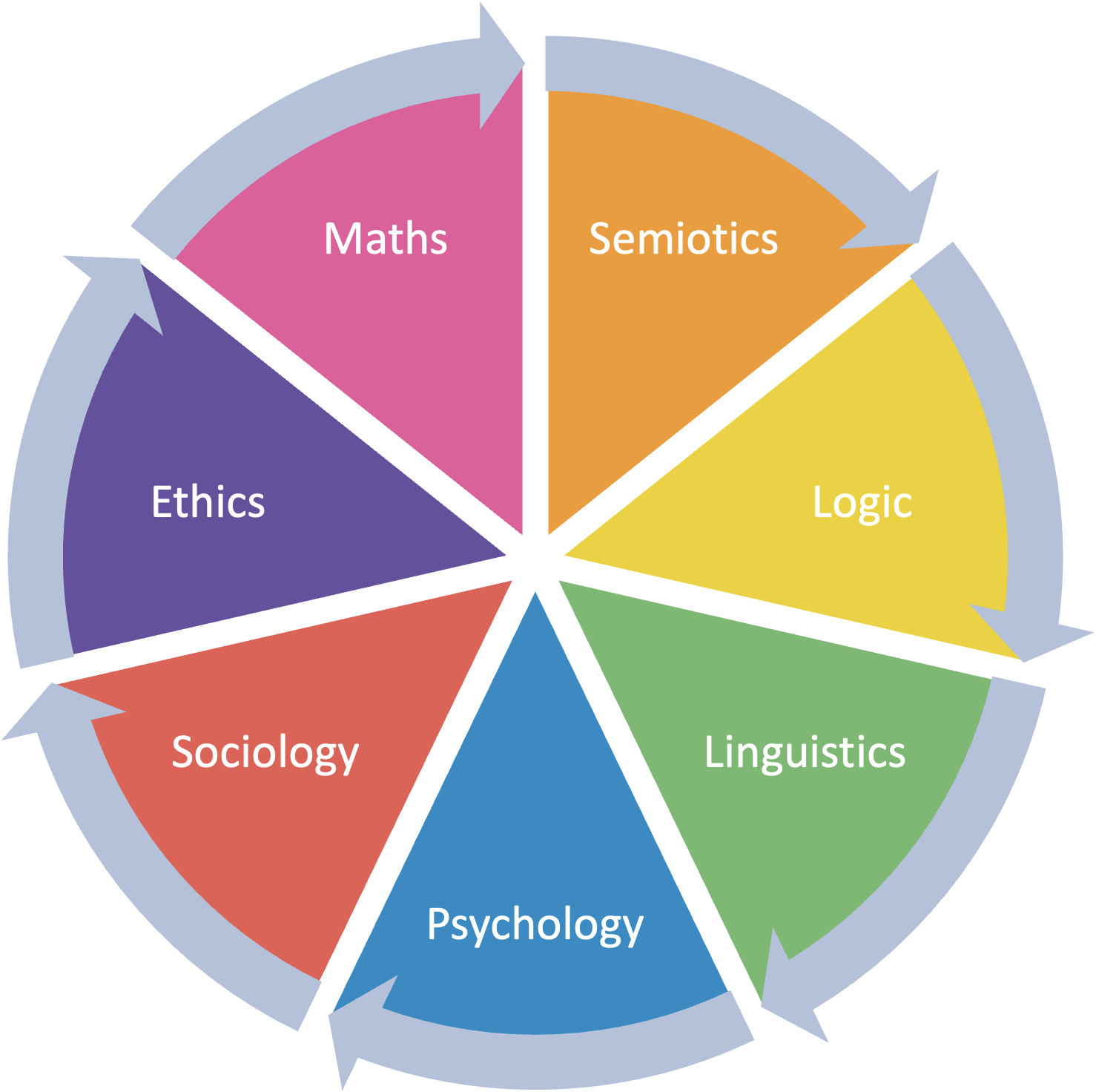

1. Shift from mono- to multidisciplinarity – evidenced by the concomitant use of symbolic and sub-symbolic AI, for knowledge representation and reasoning; semiotics, for meaning encoding and decoding; mathematics, for carrying out tasks such as graph mining and multidimensionality reduction; linguistics, for discourse analysis and pragmatics; psychology, for cognitive and affective modeling; sociology, for understanding social network dynamics and social influence; and, finally, ethics, for understanding related issues about the nature of mind and the creation of emotional machines.

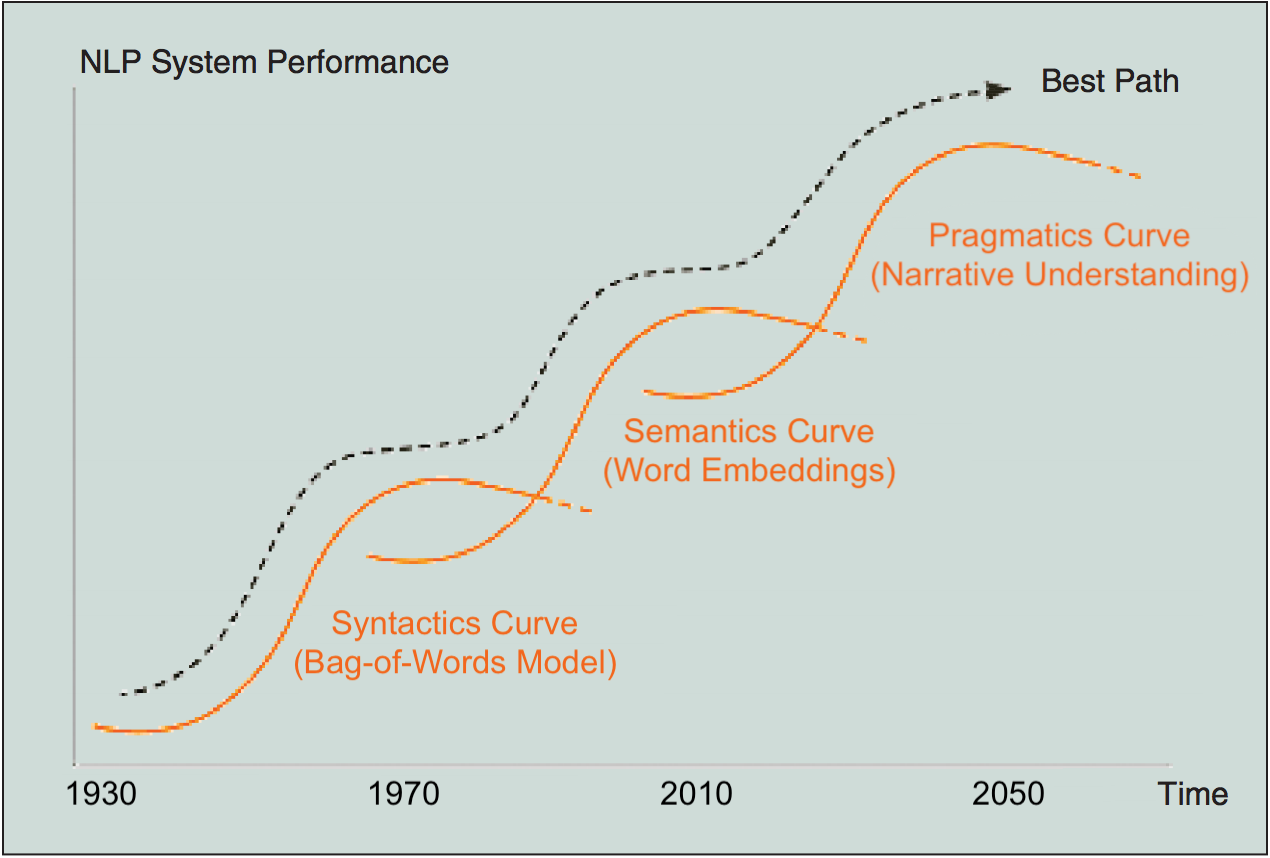

2. Shift from syntax to semantics – enabled by the adoption of the bag-of-concepts model instead of simply counting word co-occurrence frequencies in text. Working at concept level entails preserving the meaning carried by multiword expressions such as cloud_computing, which represent ‘semantic atoms’ that should never be broken down into single words. In the bag-of-words model, for example, the concept cloud_computing would be split into computing and cloud, which may wrongly activate concepts related to the weather and, hence, compromise categorization accuracy.

3. Shift from statistics to linguistics – implemented by allowing sentiments to flow from concept to concept based on the dependency relation between clauses. The sentence “iPhone is expensive but nice”, for example, is identical to “iPhone is nice but expensive” from a bag-of-words perspective. However, the two sentences bear opposite polarity: the former is positive, as the user seems willing to make the effort to buy the product despite its high price; the latter is negative, as the user complains about the price of the iPhone although they like it.
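
The last two shifts can be illustrated with a minimal Python sketch. It is a toy under stated assumptions, not the SenticNet implementation: the concept vocabulary `CONCEPTS`, the polarity values in `POLARITY`, and the helper names are all hypothetical, and the "clause after *but* decides" rule is a deliberately crude stand-in for dependency-based sentiment flow.

```python
from collections import Counter

# Hypothetical concept vocabulary; a real system would draw on a knowledge base.
CONCEPTS = {("cloud", "computing")}

# Toy polarity lexicon with assumed values, for illustration only.
POLARITY = {"expensive": -1, "nice": 1}


def bag_of_words(text):
    """Count single lowercase tokens; word order is discarded."""
    return Counter(text.lower().split())


def bag_of_concepts(text, concepts=CONCEPTS, max_len=3):
    """Count tokens, but keep known multiword expressions as single units."""
    tokens = text.lower().split()
    bag = Counter()
    i = 0
    while i < len(tokens):
        # Greedy longest match: try the longest candidate span first.
        for n in range(min(max_len, len(tokens) - i), 1, -1):
            if tuple(tokens[i:i + n]) in concepts:
                bag["_".join(tokens[i:i + n])] += 1
                i += n
                break
        else:
            # No multiword concept starts here; fall back to the single token.
            bag[tokens[i]] += 1
            i += 1
    return bag


def but_polarity(text):
    """Toy adversative rule: the clause after 'but' decides the polarity."""
    tokens = text.lower().split()
    if "but" in tokens:
        tokens = tokens[tokens.index("but") + 1:]
    return sum(POLARITY.get(t, 0) for t in tokens)


a = "iPhone is expensive but nice"
b = "iPhone is nice but expensive"
print(bag_of_words(a) == bag_of_words(b))      # same bag of words
print(but_polarity(a), but_polarity(b))        # opposite polarity
print(bag_of_concepts("cloud computing is nice"))
```

The two iPhone sentences produce the same bag of words, while the order-aware rule assigns them opposite signs. Likewise, `bag_of_concepts` keeps `cloud_computing` as one unit, so the unrelated single word `cloud` never appears in the representation.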

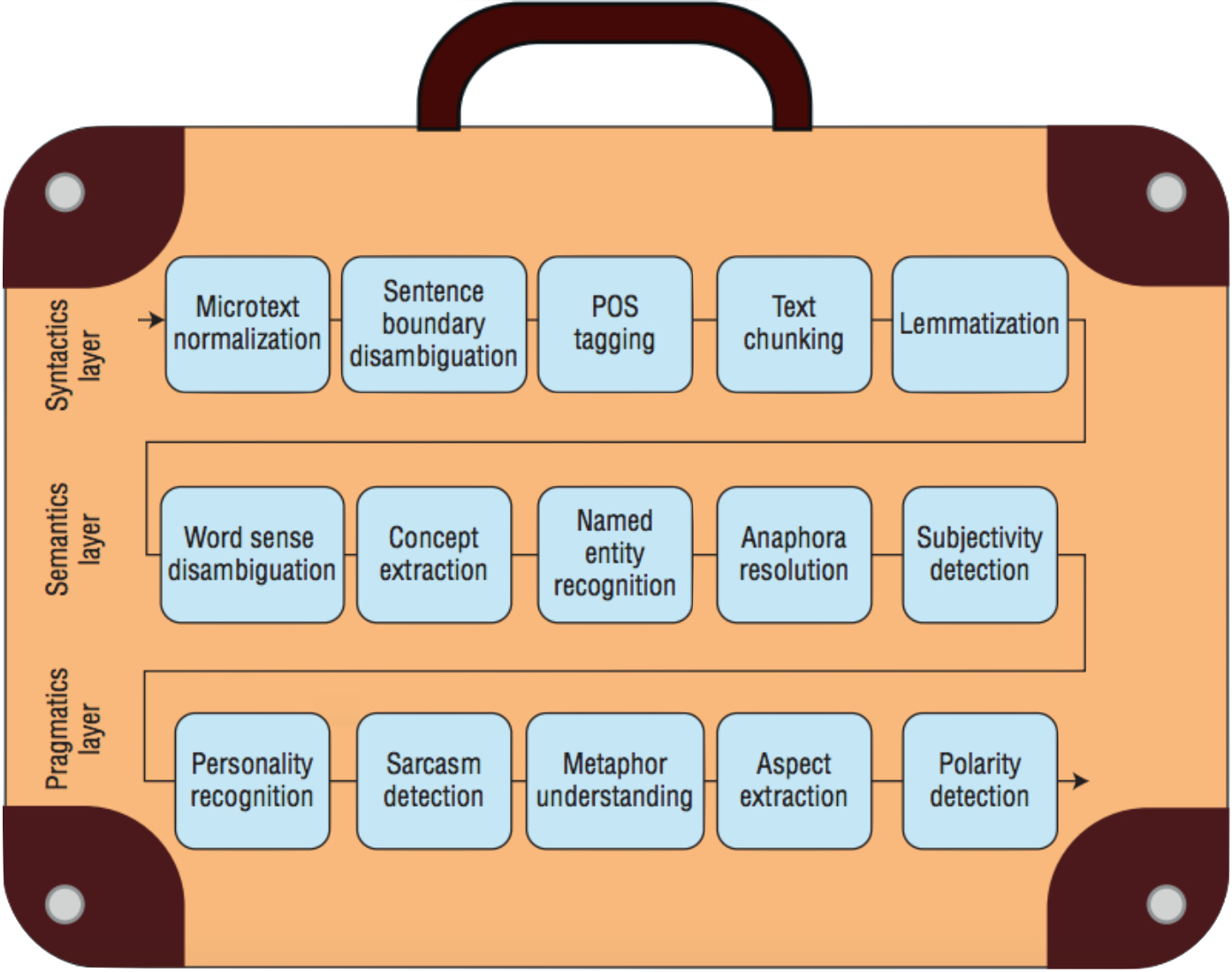

Sentic computing takes a holistic approach to natural language understanding by handling the many sub-problems involved in extracting meaning and polarity from text. While most works approach it as a simple categorization problem, sentiment analysis is actually a suitcase research problem that requires tackling many NLP tasks. As Marvin Minsky would say, the expression 'sentiment analysis' itself is a big suitcase (like many others related to affective computing, e.g., emotion recognition or opinion mining) that all of us use to encapsulate our jumbled idea about how our minds convey emotions and opinions through natural language. Sentic computing addresses the composite nature of the problem via a three-layer structure that explicitly handles important NLP tasks such as named entity recognition, personality detection, metaphor understanding, and aspect extraction, which other NLP classifiers are instead forced to implicitly handle without proper training.

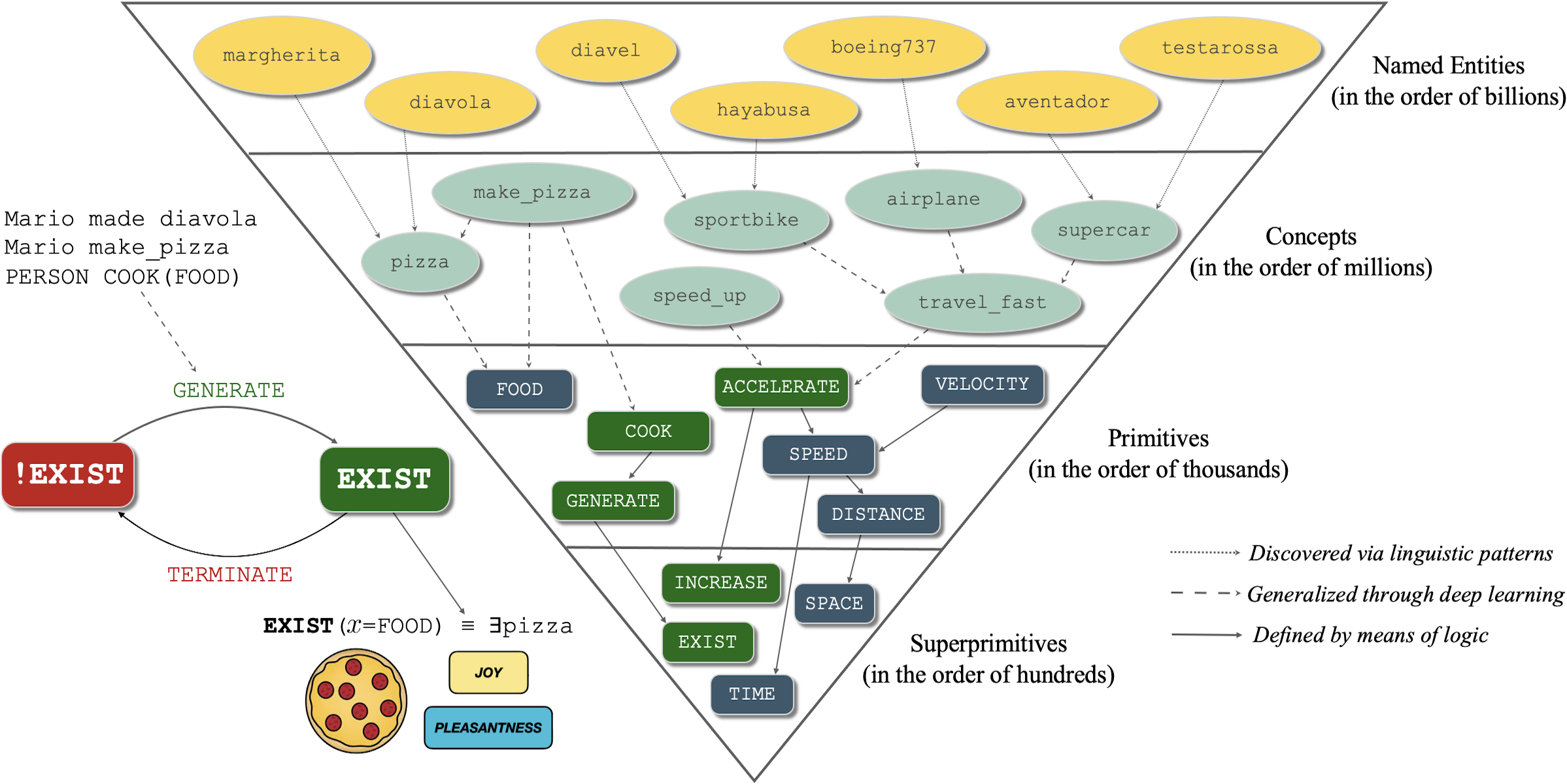

The core element of sentic computing is SenticNet, a large commonsense knowledge base built via an ensemble of symbolic and sub-symbolic AI tools. In particular, SenticNet uses deep learning to generalize words and multiword expressions into primitives, which are later defined by means of logical reasoning in terms of superprimitives. For example, expressions like purchase_samsung_galaxy, shop_for_iphone, or buy_huawei_mate are all generalized as BUY(PHONE) and later deconstructed into smaller units thanks to definitions such as BUY(𝑥) = GET(𝑥) ∧ GIVE($), where GET(𝑥) is defined in terms of the superprimitive HAVE as the transition from not having 𝑥 to having 𝑥, i.e., !HAVE(𝑥) → HAVE(𝑥). The superprimitive HAVE, finally, is defined by means of first-order logic as HAVE(subj, obj) = ∃ obj @ subj.
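
The generalization and deconstruction steps described above can be sketched in a few lines of Python. This is an illustrative toy, not the SenticNet API: the lookup table `PRIMITIVES` is a hypothetical stand-in for the deep-learning generalization step, and the function names are assumptions. Only the definitions of BUY and GET are taken from the text.

```python
# Hypothetical stand-in for the learned generalization step:
# each multiword expression maps to a (primitive, argument) pair.
PRIMITIVES = {
    "purchase_samsung_galaxy": ("BUY", "PHONE"),
    "shop_for_iphone": ("BUY", "PHONE"),
    "buy_huawei_mate": ("BUY", "PHONE"),
}


def generalize(concept):
    """Return the primitive form of a concept, e.g. BUY(PHONE)."""
    primitive, argument = PRIMITIVES[concept]
    return f"{primitive}({argument})"


def get(x):
    """GET(x): the transition from not having x to having x."""
    return f"(!HAVE({x}) → HAVE({x}))"


def buy(x):
    """BUY(x) = GET(x) ∧ GIVE($)."""
    return f"{get(x)} ∧ GIVE($)"


# Definitions of primitives in terms of smaller units.
DEFINITIONS = {"BUY": buy}


def deconstruct(concept):
    """Expand a concept's primitive into its superprimitive-level definition."""
    primitive, argument = PRIMITIVES[concept]
    return DEFINITIONS[primitive](argument)


for concept in PRIMITIVES:
    print(concept, "=>", generalize(concept), "=>", deconstruct(concept))
```

All three expressions collapse to the same primitive and therefore share one definition, which is what makes each reasoning step easy to record and inspect.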

This way, sentic computing is one step closer to natural language understanding and is superior to other purely statistical NLP frameworks that do not truly encode meaning. Moreover, the sentic computing framework is reproducible (because each reasoning step can be explicitly recorded and replicated through each iteration), interpretable (because the process that generalizes input words and multiword expressions into their corresponding primitives is fully transparent), trustworthy (because classification outputs, e.g., positive versus negative, come with a confidence score), and explainable (because classification outputs are explicitly linked to emotions and the input concepts that convey these).